Intel Xeon Sapphire Rapids: How To Go Monolithic with Tiles

by Dr. Ian Cutress on August 31, 2021 10:00 AM EST

The March of More Silicon: Connectivity Matters

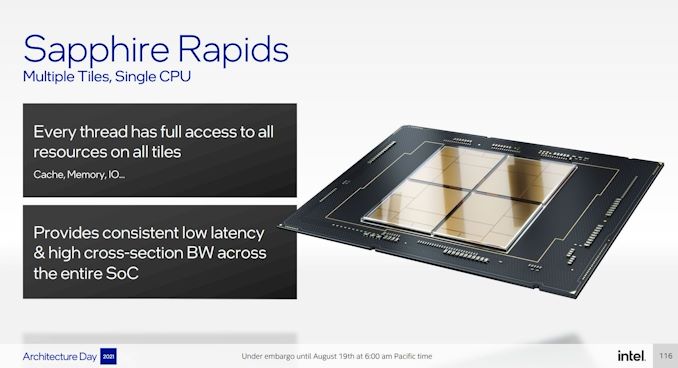

To date, all of Intel’s leading-edge Xeon Scalable processors have been monolithic, i.e. one piece of silicon. Having a single piece of silicon has its advantages, namely a fast in-silicon interconnect between cores and a single power interface to manage. However, as we move to smaller and smaller process nodes, having one large piece of silicon has downsides: large dies are hard to manufacture in volume without defects, which increases cost if you want the high-core count models, and the size of a single die also ends up being a limit.

The alternative to a large monolithic design is to cut it up into smaller bits of silicon and connect them together. The main advantages here are better silicon yield and configurability, by having different silicon for different functions as needed. With a multi-die design, you can ultimately end up with more silicon than a monolithic design can provide: the reticle (manufacturing) limit for a single silicon die is ~700-800 mm², and with a multi-die processor several smaller silicon dies can be put together, easily pushing over 1000 mm². Intel has stated that each of its silicon tiles is ~400 mm², creating a total of around 1600 mm². But the major downside to multi-die designs is connectivity and power.
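To put rough numbers on the area and yield argument, here is a minimal sketch. The tile area and reticle limit come from the figures above; the defect density and the simple Poisson yield model are assumptions chosen purely for illustration, not figures from Intel.

```python
import math

TILE_AREA_MM2 = 400        # approximate per-tile area quoted by Intel
TILE_COUNT = 4
RETICLE_LIMIT_MM2 = 800    # upper end of the ~700-800 mm2 range above

# Hypothetical defect density, picked only to illustrate the trend.
ASSUMED_DEFECTS_PER_CM2 = 0.1

def poisson_yield(area_mm2, defects_per_cm2):
    """Fraction of defect-free dies under a simple Poisson yield model."""
    area_cm2 = area_mm2 / 100.0
    return math.exp(-area_cm2 * defects_per_cm2)

total_area = TILE_AREA_MM2 * TILE_COUNT
tile_yield = poisson_yield(TILE_AREA_MM2, ASSUMED_DEFECTS_PER_CM2)
reticle_yield = poisson_yield(RETICLE_LIMIT_MM2, ASSUMED_DEFECTS_PER_CM2)

print(f"total silicon: {total_area} mm2 "
      f"({total_area / RETICLE_LIMIT_MM2:.1f}x the reticle limit)")
print(f"modelled yield, 400 mm2 tile: {tile_yield:.0%}")
print(f"modelled yield, reticle-sized die: {reticle_yield:.0%}")
```

Whatever defect density is plugged in, the smaller die always yields better under this model, and four tiles add up to about twice what a single reticle-limited die could hold.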

The simplest way to package two chips together on a substrate is through intra-substrate connections, or what essentially amounts to PCB traces. This is a high-yielding process, however it has the two drawbacks listed above: connectivity and power. It costs more energy to send a bit over a PCB connection than it does through silicon, and the bandwidth is much lower because the signals cannot be as densely packed. As a result, without careful planning, a multi-die connected product will have to be aware of how far away data is at any one time, an issue few monolithic products have.

The way around this is with a faster interconnect. Rather than putting that connectivity through the substrate, through the package, what if it was through silicon anyway? By placing these connected dies on a piece of silicon, such as an interposer, the connectivity traces have better signal integrity, and better power. Using an interposer, this is commonly referred to as 2.5D packaging. It costs a bit more than standard packaging technology (there’s also scope for active interposers with logic), but we also have another limitation in that the interposer has to be bigger than all the silicon put together. But overall, this is a better option, especially if you want your multi-die product to act as if it were monolithic.

Intel decided that the best way to beat the downsides of interposers but still get the benefits of an effective monolithic silicon design was instead to create super small interposers that lived inside the substrate. By pre-embedding them in the right location, with the right packaging tools two chips could be placed on this small Embedded Multi-Die Interconnect Bridge (EMIB), and voilà, a system that works as close to a monolithic design as is physically possible.

Intel has worked on the EMIB technology for over a decade. The development has had three major milestones from our perspective: (1) being able to embed the bridge into a package with a high yield, (2) being able to place big silicon die on the bridge at high yield, and (3) being able to put two high-powered die next to each other on a bridge. It is that third part that I think Intel has struggled with the most – by having two high-powered die next to each other, especially if the die have different coefficients of thermal expansion and different thermal properties, there is the potential of weakening the substrate around the bridge or the connections to the bridge itself. Almost all of Intel’s products that used EMIB so far have been around connecting a CPU/GPU to high-bandwidth memory, which is an order of magnitude lower power than what it’s being connected to. Because of that, I wasn’t convinced that putting two high-powered tiles together was possible, at least until Intel announced a multi-die FPGA connected by EMIB using two high-powered FPGA tiles in late 2019. From that point on, it was only a matter of time before Intel enabled the technology on its CPU product stack. We’re finally getting that with Sapphire Rapids.

10x EMIB on Sapphire Rapids

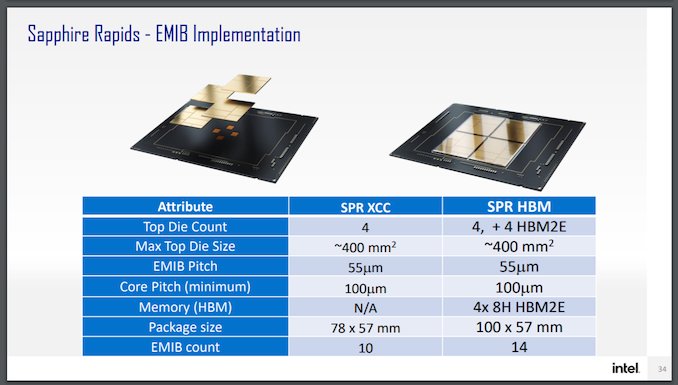

Sapphire Rapids is going to be using four tiles connected with 10 EMIB connections using a 55-micron connection pitch. Normally you might think that a 2x2 array of tiles would need equal EMIBs per tile-to-tile connection, so in this case with 2 EMIBs per connection, that would be eight – why is Intel quoting 10 here? That comes down to the way Sapphire Rapids is designed.

Because Intel wants SPR to look monolithic to every operating system, Intel has essentially cut its inter-core mesh horizontally and vertically. That way each connection through the EMIB is seen purely as the next step on the mesh. But Intel’s monolithic designs are not symmetric in either of those dimensions – usually features like the PCIe or QPI are on the edges, and not in the same place in every corner. Intel has told us that in Sapphire Rapids, this is similarly the case, and one dimension is using 3 EMIBs per connection while the other dimension is using 2 EMIBs per connection.

By avoiding strict rotational symmetry in its design, and without a central IO hub, Intel is leaning heavily to acting as a monolithic die – leaning so heavily it’s almost falling over to do so. As long as the EMIB connections are consistent between tiles, software shouldn’t have to worry, although until we get further details here, it’s hard to speculate exactly why without going through the motions of trying to figure out Intel’s mesh designs and how the extra parts all connect together. SPR sounds like a monolithic design cut up, rather than a ground-up multi-die design, if that makes sense.

Intel announced earlier this year that it will make an HBM version of Sapphire Rapids, using four HBM tiles. These will also be connected by EMIB, one per tile.
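The bridge counts in this section can be tallied with a short sketch. The 2x2 grid, the 3-and-2 split, and the one-bridge-per-HBM-tile figure come from the text above; which physical axis carries 3 bridges has not been disclosed, so the axis labels here are placeholders, and the combined total for the HBM version is simply the sum of the stated figures.

```python
ROWS, COLS = 2, 2

# Intel says one dimension uses 3 EMIBs per connection and the other 2.
# Which axis is which is not disclosed; "A" and "B" are placeholder labels.
EMIBS_PER_LINK_AXIS_A = 3
EMIBS_PER_LINK_AXIS_B = 2
HBM_EMIBS_PER_TILE = 1

def neighbour_links(rows, cols):
    """Return (links along axis A, links along axis B) for a rows x cols grid."""
    along_a = rows * (cols - 1)   # left/right neighbours
    along_b = (rows - 1) * cols   # top/bottom neighbours
    return along_a, along_b

links_a, links_b = neighbour_links(ROWS, COLS)

naive_total = (links_a + links_b) * 2   # the "equal EMIBs" guess
tile_emibs = links_a * EMIBS_PER_LINK_AXIS_A + links_b * EMIBS_PER_LINK_AXIS_B
hbm_emibs = ROWS * COLS * HBM_EMIBS_PER_TILE

print(f"naive guess: {naive_total}")                     # 8
print(f"tile-to-tile EMIBs: {tile_emibs}")               # 10
print(f"with four HBM tiles: {tile_emibs + hbm_emibs}")  # 14
```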

Tiles Tiles Tiles

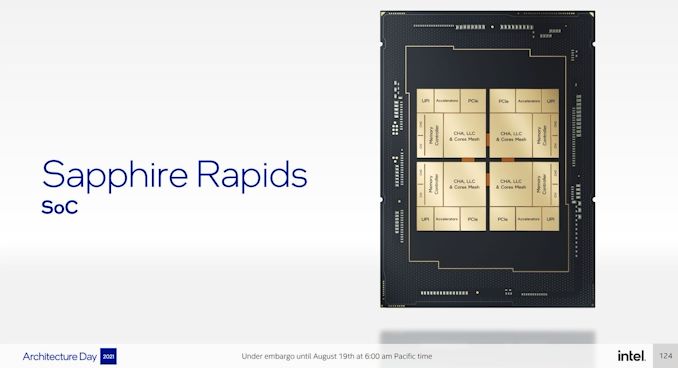

Intel did give an insight into what exactly each of the separate tiles will have inside it, however this was extremely high level:

Each tile has:

- cores, cache, and mesh

- A memory controller with 2x64-bit DDR5 channels

- UPI links

- Accelerator links

- PCIe links

In this situation, and throughout the presentation, it looks like all four tiles are equal, with the rotational symmetry I mentioned above. To make silicon that does this, in the way presented, isn’t as easy as mirroring the design and printing that onto a silicon wafer. The crystal plane of the wafer limits how designs can be built, and so any mirroring has to be redesigned completely. As a result, Intel confirmed that it has to use two different sets of masks to build Sapphire Rapids, one each for the two dies it has to make. It can then rotate each of these two dies to build the 2x2 tile grid as shown.

It’s worth comparing this to AMD’s first-generation EPYC, which also used a 2x2 chiplet method, albeit with connectivity through the package. AMD escaped the need for having multiple silicon designs by having it rotationally symmetric – AMD built four die-to-die interfaces on the silicon, but only used three for each rotation. It’s a cheaper solution (and one that was right for AMD’s financial situation at the time) at the cost of die area, but also enables a level of simplicity. AMD’s central IO die method in newer EPYCs moves away from this issue entirely. From my perspective, it’s something Intel is going to have to move towards if they want to scale beyond SPR but also for a different reason.

As it stands, each of the tiles holds 128-bits of DDR5 memory interfaces, for a total of 512-bits across all four tiles. Physically, this means we will see eight 64-bit memory controllers* for either eight or sixteen memory modules per socket in a system. That’s perfectly fine for versions of Sapphire Rapids with all four compute tiles.
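The memory arithmetic above can be checked with a small sketch. The per-tile figures come from Intel's tile description; the `dimms_per_channel` parameter is a name introduced here to cover the one-module and two-module cases.

```python
TILES = 4
CHANNELS_PER_TILE = 2      # 2x64-bit DDR5 per tile
CHANNEL_WIDTH_BITS = 64

def memory_config(tiles, dimms_per_channel):
    """Summarise the socket's memory interface for a given tile count."""
    channels = tiles * CHANNELS_PER_TILE
    return {
        "channels": channels,
        "bus_width_bits": channels * CHANNEL_WIDTH_BITS,
        "dimm_slots": channels * dimms_per_channel,
    }

for dpc in (1, 2):
    cfg = memory_config(TILES, dpc)
    print(f"{dpc} DIMM(s) per channel: {cfg['channels']} channels, "
          f"{cfg['bus_width_bits']}-bit total, {cfg['dimm_slots']} modules")
```

Because the channel count is tied directly to the tile count in this model, any part built from fewer than four of these tiles would expose fewer channels.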

However, we know that the Sapphire Rapids processor offering is going to have to scale down to fewer cores. In the past, Intel would create three different silicon monolithic variations to cater for these markets and optimize silicon output, but all the processors would have the same memory controller count.

This means that if SPR is going to offer versions with fewer cores, Intel is either going to create dummy tiles without any cores on them but still keep the PCIe/DDR5 as required, or quite simply those lower core count parts are going to have fewer memory controllers. That’s going to be a pain for system manufacturers who want to build catch-all systems, because they’re going to have to build for both extremes.

The other alternative is that Intel has monolithic versions of SPR with all 8 memory channels for lower core count designs. But at this time, Intel has not disclosed how it is going to cater to those markets.

*technically DDR5 puts two 32-bit channels on a single module, but as yet the industry doesn’t have a term to differentiate between a module with one 64-bit memory channel on it vs. a module with two 32-bit memory channels on it. The word ‘channel’ has often been interchangeable with ‘memory slot’ to date, but this will have to change.

94 Comments

SystemsBuilder - Tuesday, August 31, 2021 - link

page 1, Golden Cove: A High-Performance Core with AMX and AIA, text under the AMX picture: "AMX uses eight 1024-bit registers for basic data operators" should be 1024 BYTE (or 1KByte) not 1024-bit.

AMX has 8 (row/column) configurable 1KB so called T registers, i.e. the 8 T registers can be configured to use a maximum size of 1KByte each but can also be smaller configured by row and columns parameters (you set tile configuration for each tile with the STTILECFG assembly instruction: i.e. row, columns, BF16/INT8 data type etc).

For more details see AMX section in this document:

https://software.intel.com/content/www/us/en/devel...

SystemsBuilder - Tuesday, August 31, 2021 - link

Can't edit so have to use a comment to clarify: LDTILECFG is used for setting the tile file configuration of all 8 tiles (# of rows and # of columns per T register, while data type is not set by this instruction), while STTILECFG is used for reading out the current tile file configuration and storing it in memory.

Ian Cutress - Tuesday, August 31, 2021 - link

My slide from Intel architecture day says 1 Kb = 1 kilo-bit. It literally says that in the slide above the paragraph you're referencing.

So either a typo in the slide, or a typo in the AMX doc.

SystemsBuilder - Tuesday, August 31, 2021 - link

It's a typo from Intel on the slides that you unfortunately propagated.

Should be 1KByte not 1Kb (as in 1 Kbit).

yeah this presentation was not one of intel's finest moments...

just read the full spec here: https://software.intel.com/content/www/us/en/devel...

There is significantly more detail in the full documentation. All sorts of limitations on the number of rows (max 16), for instance, which complicates INT8 matrices just as an example... What I would have liked is to be able to fully configure # of rows and # of columns within the 1KByte for a given data type, to fully use each T register's 1KByte size. We now need to have rectangular NxM matrix tiles instead of the preferable square NxN matrix tiles (and fit them into 16xM = 1024 bytes, solve for M). Symmetric N x N tiles make algorithms easier...
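As a rough check of the arithmetic in the comment above, this sketch solves 16 x M = 1024 bytes and shows how much of a tile a square matrix can use. The 1 KByte tile size and 16-row maximum are taken from the comment; the element sizes (1 byte for INT8, 2 bytes for BF16) are the standard widths of those types. The function names are illustrative only.

```python
TILE_BYTES = 1024
MAX_ROWS = 16
ELEMENT_BYTES = {"INT8": 1, "BF16": 2}

# Solve 16 x M = 1024 bytes for M.
bytes_per_row = TILE_BYTES // MAX_ROWS

def full_tile_shape(dtype):
    """(rows, columns in elements) when the whole 1 KByte tile is used."""
    return MAX_ROWS, bytes_per_row // ELEMENT_BYTES[dtype]

def square_tile_utilisation(dtype):
    """Largest N x N tile that fits, and the fraction of the tile it fills."""
    rows, cols = full_tile_shape(dtype)
    n = min(rows, cols)
    return n, n * n * ELEMENT_BYTES[dtype] / TILE_BYTES

print(f"M = {bytes_per_row} bytes per row")
for dtype in ELEMENT_BYTES:
    rows, cols = full_tile_shape(dtype)
    n, used = square_tile_utilisation(dtype)
    print(f"{dtype}: full tile {rows}x{cols}, "
          f"largest square {n}x{n} uses {used:.0%} of the tile")
```

With the row count capped at 16, a square INT8 tile fills only a quarter of the register and a square BF16 tile half of it, which is why rectangular tiles are needed to use the full 1 KByte.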

SystemsBuilder - Tuesday, August 31, 2021 - link

Ian, to be clear, the intel AMX spec in the intel doc: https://software.intel.com/content/www/us/en/devel... spends the entire chapter 3 (25 pages) discussing AMX in detail, stating multiple times that each T register is 1KByte and the whole register file size is 8KByte, also detailing each assembly instruction etc.

Additionally, the first rev of this document was published last summer and the latest rev was published in June this year. During this whole time the T register 1KByte size has never changed (but more details have been included with each revision over the past 12 months).

Further, glibc and various compilers have already included AMX extensions based on this spec. It would be quite catastrophic for them if intel suddenly cut the T reg size to 1024 bits.

Also, T reg size is not really new news. https://fuse.wikichip.org/news/3600/the-x86-advanc... published a pretty good article already last summer about this (also stating T regs are 1Kbyte).

Lastly, it makes no logical sense to only have 1024bit (128Bytes) tile regs because it is just too small.

Hence, you can safely assume that intel messed up on the slide and adjust your article accordingly. If you still don't believe it, ask intel yourself.

schujj07 - Tuesday, August 31, 2021 - link

One of the rumors for Gen 4 Epyc is 12 channels of DDR5. Now this is just a rumor so it HAS to be taken with a grain of salt. However, if Epyc goes 12 channels, Arm goes 12 channels, and SPR is at 8 channels we could see another instance like Gen 1 & 2 Xeon Scalable not having RAM parity. While going DDR5 does increase the bandwidth, I don't think it does enough to justify not increasing the channels at the same time.

JayNor - Wednesday, September 1, 2021 - link

The four stacks of HBM, each with 8 channels DDR should take care of Intel's bandwidth issues for AI operations.

schujj07 - Wednesday, September 1, 2021 - link

Bandwidth might be OK for AI with HBM on SPR. One thing to remember is that most of these are going to be running on hypervisors. 6 channel RAM became immediately an issue with Xeon Scalable (especially with their old 1TB/socket limit without L series CPUs where you could only get 768GB RAM). If they only have 8 channels when everyone else has 12 channels you cannot put as much RAM into a system for cheap. Most servers are dual socket and if you are using a hypervisor RAM capacity matters A LOT. If you can have 1.5TB (dual socket with 64GB DIMMs) instead of 1TB (dual socket with 64GB DIMMs) that makes a huge difference for running VMs. All the hosts in my datacenter run with 1TB RAM & dual 32c/64t CPUs. We are not CPU limited but we are RAM limited on each host. While VMware can do RAM compression/ballooning, once you start over provisioning RAM you will start running into performance issues. I've read that after about 10-15% over provisioning on RAM you start getting pretty major performance loss. I've experienced VMs basically stall out (like what happened in the early 2000s when your computer used 512MB RAM and you only had 384MB RAM) at a 50% over provision. Basically depending on the workload bandwidth isn't everything.

Spunjji - Tuesday, September 7, 2021 - link

At what cost, though?

schujj07 - Tuesday, September 7, 2021 - link

If you have to ask you cannot afford it.