Samsung's new 200MP HP1 Sensor: Sensible, or Marketing?

by Andrei Frumusanu on September 23, 2021 10:00 AM EST - Posted in Mobile, Smartphones, Camera Sensors, Sensors, Samsung HP1

This week, Samsung LSI announced a new camera sensor that seemingly pushes the limits of resolution within a mobile phone. The new S5KHP1, or simply HP1, pushes the resolution above 200 megapixels, almost double what’s currently deployed in today’s phones.

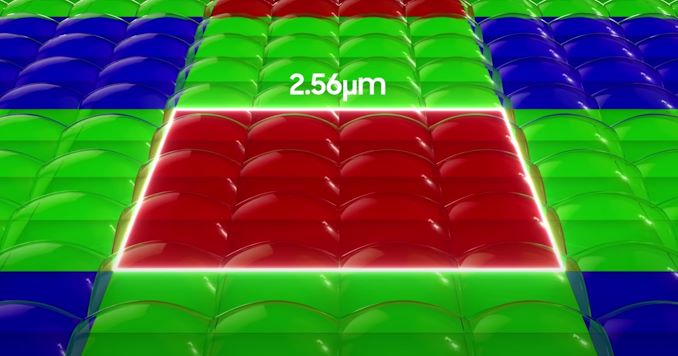

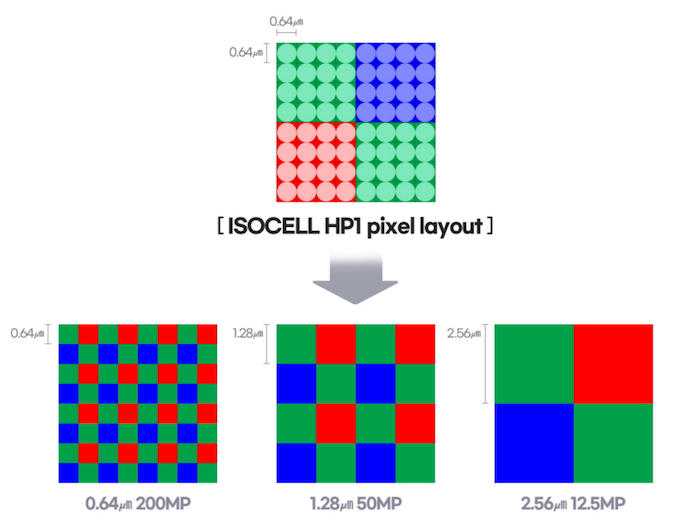

The new sensor is interesting because it implements a new binning mechanism that goes beyond the currently deployed Quad-Bayer (4:1 pixel binning) or “Nonapixel” (9:1 binning) schemes: the new “ChameleonCell” mechanism is able to employ both 4:1 binning in a 2x2 structure and 16:1 binning in a 4x4 structure.

We’ve been familiar with Samsung’s 108MP sensors for a while now as they’ve seen adoption for over two years both in Samsung Mobile phones as well as in Xiaomi devices, although in slightly different sensor configurations. The most familiar implementation is likely the HM1 and HM3 modules in the S20 Ultra and S21 Ultra series, which implement the “Nonapixel” 9:1 pixel binning technique to aggregate 9 pixels into 1 for regular 12MP captures in most scenarios.

One problem with the 9:1 scheme is that switching between the binned 12MP mode and the native 108MP mode amounts to a 3x magnification step. For devices such as the S21 Ultra, this made the native mode mostly redundant, as the phone had a dedicated 3x telephoto module that achieves a similar pixel spatial resolution at a larger pixel size.

8K video recording was one use-case where the native 108MP resolution made sense. Even here, however, the native resolution is far above the roughly 33MP required for 8K video, meaning the phone had to suffer a very large field of view crop, as it didn’t support supersampling down from 108MP to 33MP.
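The size of that crop can be estimated with simple arithmetic. The sketch below is only an illustration: it assumes an 8K UHD frame of 7680 x 4320 pixels read 1:1 from the centre of the sensor, with no extra margin for stabilisation, and the function name `one_to_one_crop` is made up for this example. Actual phone pipelines may behave differently.

```python
def one_to_one_crop(native_width, native_height,
                    video_width=7680, video_height=4320):
    """Estimate the crop when video is read 1:1 from the sensor centre.

    Returns (horizontal crop factor, fraction of sensor area used).
    The 7680 x 4320 default is the 8K UHD frame size (an assumption here).
    """
    if video_width > native_width or video_height > native_height:
        raise ValueError("sensor mode is smaller than the video frame")
    linear_crop = native_width / video_width
    area_used = (video_width * video_height) / (native_width * native_height)
    return linear_crop, area_used


# HM3 native mode from the comparison table: 12000 x 9000 pixels.
hm3_crop, hm3_area = one_to_one_crop(12000, 9000)
print(f"HM3 native: {hm3_crop:.2f}x horizontal crop, "
      f"{hm3_area:.0%} of the sensor area used")
```

Under these assumptions, an 8K frame uses less than a third of the HM3's pixel array, which is why the field of view narrows so much.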

Sensor Solution Comparisons (Optics: focal length, field of view, aperture; Sensor: resolution, pixel pitch, pixel resolution, sensor size)

| Module | 35mm eq. FL (mm) | FoV (H / V / D) | Aperture - Airy Disk | Resolution | Pixel Pitch | Pixel Res. | Sensor Size |
|---|---|---|---|---|---|---|---|
| HP1 (Theoretical) | 24.17 | 71.2° / 56.5° / 83.7° | ~f/1.9 - 1.15µm | 201.3M native (16384 x 12288) / 50.3M 2x2 bin (8192 x 6144) / 12.6M 4x4 bin (4096 x 3072) | 0.64µm / 1.28µm / 2.56µm | 15.2″ / 30.4″ / 60.9″ | 1/1.22", 10.48mm x 7.86mm, 82.46mm² |
| HM3 (S21 Ultra) | 24.17 | 71.2° / 56.5° / 83.7° | f/1.8 - 1.09µm | 108.0M native (12000 x 9000) / 12.0M 3x3 bin (4000 x 3000) | 0.8µm / 2.4µm | 21.4″ / 64.1″ | 1/1.33", 9.60mm x 7.20mm, 69.12mm² |
| GN2 (Mi 11 Ultra) | 23.01 | 73.9° / 58.9° / 86.5° | f/1.95 - 1.18µm | 49.9M native (8160 x 6120) / 12.5M 2x2 bin (4080 x 3060) | 1.4µm / 2.8µm | 32.6″ / 65.2″ | 1/1.12", 11.42mm x 8.56mm, 97.88mm² |
| S21U 3x Telephoto | 70.04 (4:3) | 27.77° / 21.01° / 34.34° (4:3) | f/2.4 - 1.46µm | 10.87M native (3976 x 2736) / 9.99M 4:3 crop (3648 x 2736) / 12M scaled (4000 x 3000) | 1.22µm | 27.4″ | 1/2.72", 4.85mm x 3.33mm, 16.19mm² |
| S21U 10x Telephoto | 238.16 (4:3) | 8.31° / 6.24° / 10.38° (4:3) | f/4.9 - 5.97µm | 10.87M native (3976 x 2736) / 9.99M 4:3 crop (3648 x 2736) / 12M scaled (4000 x 3000) | 1.22µm | 8.21″ | 1/2.72", 4.85mm x 3.33mm, 16.19mm² |

The new HP1 sensor now has two binning modes: 4:1 and 16:1. The 4:1 mode effectively turns the 201MP native resolution into 50MP captures; cropping those into a 12.5MP frame results in a 2x magnification, which is more in line with what we’re used to from Quad-Bayer sensors. In fact, the results here would be pretty much in line with Quad-Bayer sensors, as the native colour filter of the HP1 is still only 12.5MP, meaning a single R/G/B filter site covers 16 native pixels.
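The relationship between the binning factor, output resolution and effective pixel pitch is plain arithmetic, sketched below using the HP1 figures from the table (16384 x 12288 native, 0.64µm pitch). The `BinnedMode` class and `binned_mode` helper are hypothetical names for this illustration only and say nothing about how the sensor actually implements binning.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BinnedMode:
    factor: int        # n for an n x n binning structure (1 = native)
    width: int         # output width in pixels
    height: int        # output height in pixels
    pitch_um: float    # effective pixel pitch in micrometres

    @property
    def megapixels(self) -> float:
        return self.width * self.height / 1e6

    @property
    def pixels_per_output(self) -> int:
        # Native pixels merged into one output pixel (4:1, 16:1, ...).
        return self.factor ** 2


def binned_mode(native_width, native_height, native_pitch_um, factor):
    """Derive the output mode for n x n binning of a native pixel array."""
    if native_width % factor or native_height % factor:
        raise ValueError("array size is not divisible by the binning factor")
    return BinnedMode(factor, native_width // factor,
                      native_height // factor, native_pitch_um * factor)


hp1_modes = [binned_mode(16384, 12288, 0.64, n) for n in (1, 2, 4)]
for mode in hp1_modes:
    print(f"{mode.pixels_per_output:>2}:1  {mode.width} x {mode.height}  "
          f"{mode.megapixels:.1f}MP  {mode.pitch_um:.2f}µm")
```

The 4x4 entry corresponds to the physical colour filter grid: one filter site per 16 native pixels, at roughly 12.6MP.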

A 4:1 / 2x2 binning mode is more useful, as the quality here is generally still excellent, and it allows mobile vendors to support high-quality 2x magnification capture modes without the need for an extra camera module. It’s something that’s used by a lot of devices, but it had been missing from Samsung’s own 108MP sensors because of their 3x3 binning structure. Samsung LSI even states that the HP1 would be able to achieve 8K video recording with much less of a field of view loss due to the lesser cropping requirements.

200MP - Potentially pointless?

This leads us to the actual native resolution of the sensor, the 201MP mode. Here, the native pixel pitch of the sensor is only 0.64µm, which is minuscule and the smallest we’ve seen in the industry. What’s also quite odd is that the colour resolution is spatially 4x lower in each dimension due to the 2.56µm colour filter sites, so the demosaicing algorithm has to do more work than in the usual Quad-Bayer or Nonapixel implementations we’ve seen to date.

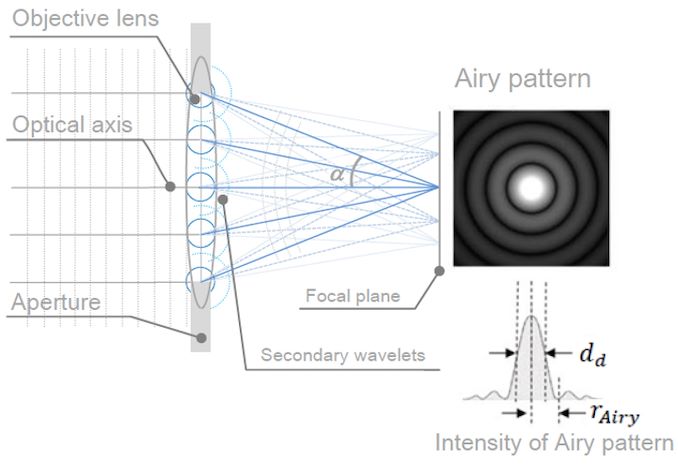

Source: JEOL

At such small pixel pitches, we run into a different problem: the diffraction limit. Usually, such large sensors are employed as the “wide” angle module, so they typically have apertures between f/1.6 and f/1.9. The HP1 is a 1/1.22” optical format sensor that’s 19% larger than the HM3 in the S21 Ultra, so an f/1.9 aperture is probably the more realistic expectation. The maximum intensity centre diameter of the airy disk at f/1.9 would be 1.15µm, and generally we tend to say that the diffraction limit, where spatial resolution gets noticeably degraded, is at twice that size, around 2.3µm. That’s well beyond the 0.64µm pixel size of the sensor.
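These figures can be approximated with the standard Airy pattern formula, where the distance from the centre to the first dark ring is 1.22 x wavelength x f-number. The article does not state which wavelength was used; the 0.5µm value below is an assumption that happens to land close to the quoted numbers, so treat this as a rough sketch and not as the author's exact method.

```python
def airy_first_minimum_radius_um(f_number, wavelength_um=0.5):
    """Distance from the Airy pattern centre to its first dark ring.

    wavelength_um defaults to 0.5 (green light); this is an assumed value,
    not one taken from the article.
    """
    return 1.22 * wavelength_um * f_number


def airy_disk_diameter_um(f_number, wavelength_um=0.5):
    """Full diameter of the central Airy disk (twice the radius above)."""
    return 2.0 * airy_first_minimum_radius_um(f_number, wavelength_um)


HP1_PIXEL_PITCH_UM = 0.64  # native pitch from the comparison table

radius = airy_first_minimum_radius_um(1.9)
diameter = airy_disk_diameter_um(1.9)
print(f"f/1.9: ~{radius:.2f}µm to first minimum, ~{diameter:.2f}µm full disk")
print(f"disk diameter / pixel pitch: ~{diameter / HP1_PIXEL_PITCH_UM:.1f}x")
```

With these assumptions, the full disk at f/1.9 spans roughly three and a half native HP1 pixels, which is the core of the argument against the 200MP mode.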

The 200MP mode might have some slight benefits and be able to resolve things better than 50MP, however I very much doubt we’ll see much advantage over current 108MP sensors. In that sense, it appears to me to be a pretty pointless mode.

Top: 3.76µm native pixels at f/6.4 (7.8µm airy disk - 2.07x ratio) - Original

Bottom: 1.22µm native pixels at f/4.9 (5.9µm airy disk - 4.83x ratio) - Original

That being said, we do actually see camera implementations in the market that are far beyond the diffraction limit in terms of their sensor pixel size and optics. For example, above I took out two 1:1-pixel crops: one from an actual camera and lens, and one from the Galaxy S21 Ultra’s periscope module. In theory, we should be seeing somewhat similar resolution, glass optics quality aside, however it’s evident that the S21U is showcasing a far lower actual spatial resolution. One big reason here is again the diffraction limit: the S21U’s periscope, at 1.22µm pixels and an f/4.9 aperture, has an airy disk of 5.9µm, or roughly 4.8x the size of the pixel, meaning that physically the incoming light cannot be resolved at more than about a quarter of the resolution of the actual sensor.
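The two ratios in the captions can be roughly reproduced by dividing the Airy disk diameter (2.44 x wavelength x f-number) by the pixel pitch. As a hedged sketch, the code below assumes a 0.5µm wavelength, which the article does not state, and uses a made-up helper name, `airy_to_pixel_ratio`. The results differ slightly from the quoted figures because the article rounds the disk diameters first.

```python
def airy_to_pixel_ratio(pixel_pitch_um, f_number, wavelength_um=0.5):
    """How many pixel widths the central Airy disk spans.

    Uses the first-minimum diameter 2.44 * wavelength * f-number.
    The 0.5µm default wavelength is an assumption for illustration.
    """
    airy_diameter_um = 2.44 * wavelength_um * f_number
    return airy_diameter_um / pixel_pitch_um


# Values taken from the two crop captions.
dedicated_camera = airy_to_pixel_ratio(pixel_pitch_um=3.76, f_number=6.4)
s21u_periscope = airy_to_pixel_ratio(pixel_pitch_um=1.22, f_number=4.9)

print(f"3.76µm pixels at f/6.4: ~{dedicated_camera:.2f}x")
print(f"1.22µm pixels at f/4.9: ~{s21u_periscope:.2f}x")
```

A ratio near 2 means the optics and the pixel grid are reasonably matched, while a ratio approaching 5 means several neighbouring pixels sample the same blurred spot.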

For the HP1 sensor to take advantage of its 200MP mode, it would need very large-aperture optics to avoid diffraction, very high-quality glass to actually resolve the details, and scenes without very demanding dynamic range, due to the extremely low full well capacity of the small pixels.

To alleviate dynamic range concerns, Samsung does state that the sensor includes its newest technologies: better deep trench isolation (“ISOCELL 3.0”) should increase the full well capacity of the pixels, and the sensor also supports dual gain converters (Smart-ISO Pro) as well as staggered HDR capture.

The one thing the sensor lacks in terms of more modern features is full-sensor dual pixel autofocus, with Samsung noting that it instead uses “Double Super PD”, with twice as many dedicated PD sites as existing Super PD implementations such as on the HM3.

Overall, the HP1 seems interesting, however I can’t shake the feeling that the 200MP mode of the sensor will have very little practical benefit. In theory, a device would be able to cover the magnification range from 1x to ~5x with quite reasonable quality and maybe avoid having a dedicated module in that focal length range, however we’ll have to see how vendors design their camera systems around the sensor. The new 2x2 binning mode is welcome, however, simply because it’s a lot more versatile than the 3x3 mode in current 108MP sensors, and it should bring large real-world benefits to the camera experience, even if the native 200MP mode doesn’t pan out as advertised.

44 Comments

drajitshnew - Saturday, September 25, 2021 - link

Well we are pretty good already at making whole computer generated words. Why bother with the cost, size and complexity of a physical camera?

Take a few portraits with something like Sony A7r4 or a1, with a 50 mm or 85 mm prime, then your phone uses map coordinates, and generates a series of super hi quality selfies.

No need to wait for the golden hour or the Blue hour, no need to scramble into awkward positions, just GLORIOUS AI.

GeoffreyA - Sunday, September 26, 2021 - link

Even better. It'll anticipate what sort of a picture you're looking for. Feeling ostentatious? Wanting to show off your holidays? No sweat. It'll pick up your mood just like that, and composite you with the Eiffel Tower at the edge of the frame. As an extra, another of your sipping coffee in Milan. All ready to upload to Facebook and the rest, hashtags included.

kupfernigk - Thursday, September 23, 2021 - link

I have to admit I do not understand the article and I would like someone to explain it to me.

There are 4 pictures showing 4 different colour filter layouts. Which one is correct? The colour filter is fixed for a given sensor.

If the colour filter has 2.56 micrometre square resolution per RGB colour, then the effective "real" pixel size is 5.15 micrometres on a side, which is what is needed to produce a single [r,g,b] colour point. This means that the real resolution is about 3Mpx, i.e. that is the number of points that can be assigned an [r,g,b] intensity. The "HP1" diagram suggests this is actually the case. On the other hand the left hand image shows [r,g,b] points 1.12 micrometres square, for 50Mpx resolution, though the real resolution would be a quarter of that, 12Mpx.

It would be helpful if the author could clarify. I admit my optics is long out of date - I was last involved with semiconductor sensors 35 years ago - but the laws of optics haven't changed in the meantime.

Pixel binning AIUI is a technique for minimising the effect of sensor defects by splitting subpixels into groups and then discarding outlying intensities in each group; at least that was what we were doing in the 1980s. Is this now wrong?

Andrei Frumusanu - Thursday, September 23, 2021 - link

No camera sensor has a colour resolution equal to its advertised pixel resolution. All colour filter sites end up being actual logical pixels in the resulting image, however they take colour information from the adjacent pixels to get to your usual RGB colour; the process is called demosaicing or de-Bayering.

In the graphic, only the top image showcases the physical colour filter, which is 2.56 microns. The ones below are supposed to showcase the "fake" logical Bayer output that the sensor sends off to the SoC.

Frenetic Pony - Thursday, September 23, 2021 - link

I'm glad to see someone take diffraction limit and such into account. It's like some Samsung exec is sitting in their office demanding more pixels without regard to any other consideration whatsoever. So the underlings have to do what they're told.

eastcoast_pete - Thursday, September 23, 2021 - link

Definitely marketing! For all the reasons listed in the article and some comments here. Reminds me of what is, in microscopy, referred to as "empty magnification". The other question Samsung doesn't seem to have an answer to is why they also make the GN2 sensor? If Samsung gets smart, they use that sensor in their new flagship phone. This 200 MP sensor is nonsensical at the pixel sizes it has and the loss of area to borders between pixels. If that sensor would be 100 times the area and then used in a larger format camera, 200 MP can make sense; in this format and use, it doesn't.

GC2:CS - Friday, September 24, 2021 - link

I do not understand the premise.

To my knowledge pretty much all smartphone cameras are diffraction limited. For example iPhone telephoto has 1 micron pixels which is not sufficient for 12MP resolution.

melgross - Tuesday, September 28, 2021 - link

I believe it’s 1.7.

eastcoast_pete - Friday, September 24, 2021 - link

I also wonder if that approach (many, but tiny pixels) also makes it easier for Samsung to still use imaging chips with significant numbers of defects. The image captured by this and similar sensors that must bin to work is heavily processed anyway, so is Samsung trying to reduce the number of defective chips to death by sheer pixel numbers? In contrast, defective pixels are probably more of a fatal flaw for a sensor with large individual pixels like the GN2. Just a guess, I don't have inside information, but if anyone knows how many GN2 chips are rejected due to too many defects, it may shed some light on why this otherwise nonsensical sensor with a large number of very tiny pixels exists. Could be as simple as manufacturing economics. Never mind what that does to image quality.