Isolated Internet Outages Caused By BGP Spike

by Brett Howse on August 14, 2014 7:00 PM EST

Posted in: Networking, Cisco, router

The day was Tuesday, August 12th 2014. I arrived home, only to find my internet connection almost unusable. Some sites such as AnandTech and Google worked fine, but large swaths of the internet such as Microsoft, Netflix, and many other sites were unreachable. As I run my own DNS servers, I assumed it was a DNS issue; however, a couple of ICMP commands later, it was clear that this was a much larger issue than something affecting only my household.

Two days later, there is a pretty clear understanding of what happened. Older Cisco core internet routers with a default configuration only allowed for a maximum of 512k routes in their Border Gateway Protocol (BGP) tables. With the internet always growing, the number of routes briefly surpassed that limit on Tuesday, which left many core routers unable to route traffic.

BGP is not something that is discussed very much, due to the average person never needing to worry about it, but it is one of the most used and most important protocols on the internet. The worst part of the outage was that it was known well in advance that this would be an issue, yet it still happened.

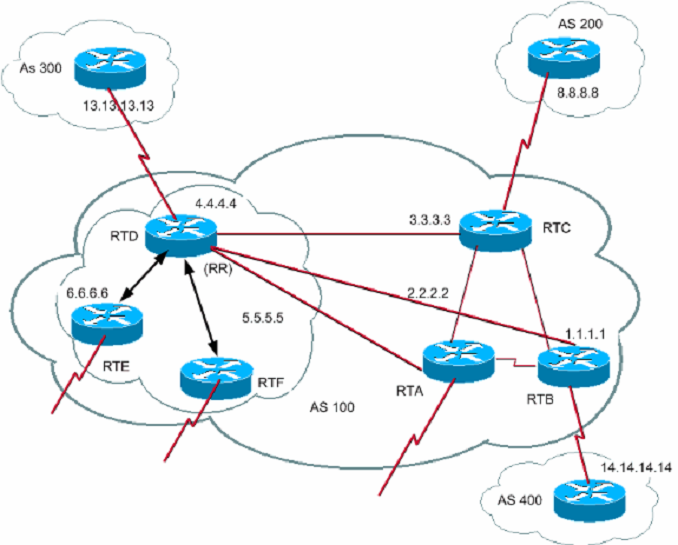

Let us dig into the root cause. Most of us have a home network of some sort, with a router and maybe a dozen or so devices on the network. We connect to an internet service provider through (generally) a modem. When devices on your local network want to talk to other devices on your network, they do so by sending packets to the switch (which is in most cases part of the router), and the switch forwards each packet to the port where the other device is connected. If the second device is not on the local network, the packets get sent to the default gateway, which then forwards them upstream to the ISP.

At the ISP level, in simple terms, it works very similarly to your LAN. The packet comes in to the ISP network, and if the destination IP address is inside the ISP’s network, it gets routed there; if it is somewhere else on the internet, the packet is forwarded onward. The big difference, though, is that an ISP does not have a single default gateway, but instead connects to several internet backbones. How packets are routed between these networks is determined by the Border Gateway Protocol. Routers that speak BGP build a table of IP subnets (prefixes) and use it to decide which port to send traffic out of, based on the paths learned from neighboring networks and the rules laid out by the network administrator.

For instance, if you want to connect to Google to check your Gmail, your computer will open a TCP connection to 173.194.33.111 (or another address as determined by your DNS settings and location). Your ISP will receive the packets and send them out the port leading to the part of the internet that is closer to the subnet the address is in. If you then want to connect to AnandTech.com, the packets will be sent to 192.65.241.100, and the ISP router’s BGP table may then send them out a different port. This continues upstream from core router to core router until the packets reach the destination subnet, where they are delivered to the web server.
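To make the lookup step a little more concrete, here is a minimal Python sketch of how a router might pick an outbound port by choosing the most specific matching prefix. The prefixes and port names below are made up for illustration and are not real BGP announcements; only the two destination addresses come from the example above. A real router does this in dedicated hardware, not in a Python loop.

```python
import ipaddress

# Hypothetical forwarding table: prefix -> outbound port.
# These prefixes and port names are assumptions for illustration only.
ROUTES = {
    ipaddress.ip_network("173.194.0.0/16"): "port-1",
    ipaddress.ip_network("173.194.33.0/24"): "port-2",
    ipaddress.ip_network("192.65.241.0/24"): "port-3",
}


def lookup(destination, routes=ROUTES):
    """Return the port of the most specific prefix containing destination.

    Returns None when no prefix in the table covers the address.
    """
    addr = ipaddress.ip_address(destination)
    matches = [net for net in routes if addr in net]
    if not matches:
        return None
    # The longest prefix (largest prefixlen) is the most specific match.
    best = max(matches, key=lambda net: net.prefixlen)
    return routes[best]


print(lookup("173.194.33.111"))  # matches both the /16 and the /24; the /24 wins
print(lookup("192.65.241.100"))
print(lookup("8.8.8.8"))         # no covering prefix in this toy table
```

The point of the sketch is that every destination has to be covered by some entry in the table. If an entry is missing, the router has nowhere to send the packet.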

With the BGP tables overfilled on certain routers in the chain, packets sent through those routers would be dropped at some point along the path, meaning you would not have any service to the affected destinations.
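As a rough illustration of that failure mode, the following Python sketch models a route table with a hard capacity. It is a toy model of the behavior described in this article, not of how any particular Cisco platform actually handles a full table; the class name, the capacity of 2, and the prefixes are all hypothetical.

```python
import ipaddress


class FixedCapacityTable:
    """Toy route table that refuses new prefixes once it is full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.prefixes = set()

    def install(self, prefix):
        """Try to install a prefix; return True if it is in the table afterwards."""
        net = ipaddress.ip_network(prefix)
        if net in self.prefixes:
            return True
        if len(self.prefixes) >= self.capacity:
            return False  # table is full, the route is not installed
        self.prefixes.add(net)
        return True

    def can_forward(self, destination):
        """Return True if some installed prefix covers the destination."""
        addr = ipaddress.ip_address(destination)
        return any(addr in net for net in self.prefixes)


# Hypothetical table with room for only two routes.
table = FixedCapacityTable(capacity=2)
results = [table.install(p) for p in ("10.0.0.0/24", "10.0.1.0/24", "10.0.2.0/24")]

print(results)                        # [True, True, False]
print(table.can_forward("10.0.1.5"))  # True
print(table.can_forward("10.0.2.5"))  # False
```

In this model, traffic to the first two prefixes keeps flowing while traffic to the third has no route, which mirrors the symptom of some sites working and others being unreachable.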

What appears to have happened is that Verizon unintentionally added approximately 15,000 /24 routes to the global routing table. These prefixes were supposed to be aggregated into a smaller number of larger blocks, but this didn’t happen, and as such, the total number of subnet prefixes in the table spiked. Verizon fixed the mistake quickly, but it still caused many routers to fail.
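To see why aggregation matters so much for table size, here is a small Python sketch using the standard `ipaddress` module. The 10.0.0.0/16 block is a hypothetical example and has nothing to do with the prefixes Verizon actually announced; it only shows how many table entries the same address space takes with and without aggregation.

```python
import ipaddress

# Hypothetical address block, split into its individual /24 subnets.
block = ipaddress.ip_network("10.0.0.0/16")
deaggregated = list(block.subnets(new_prefix=24))

# collapse_addresses merges contiguous networks into the fewest covering prefixes.
aggregated = list(ipaddress.collapse_addresses(deaggregated))

print(len(deaggregated))  # 256 separate /24 entries
print(aggregated)         # [IPv4Network('10.0.0.0/16')], a single entry
```

The same address space costs 256 table entries when announced as /24s and one entry when aggregated, which is how a single missed aggregation step can add thousands of routes at once.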

Although you could be quick to blame Verizon for the outage, it has to be noted that Cisco issued a warning to customers several months ago, explaining that the memory allocated for the BGP table would be very close to full, and gave specific instructions on how to correct it. Unfortunately, not all Cisco customers received or heeded the warning, which allowed the brief spike to cripple parts of the internet.

Newer Cisco routers were not affected, because the default configuration for the TCAM memory which is designated for the BGP table allows for more than 512,000 entries. Older routers from Cisco have enough physical memory for up to 1,000,000 entries, assuming the configuration was changed as outlined by Cisco.

The effects of outages like this can be quite potent on the internet economy, with several online services being unavailable for large parts of the day. However, this outage doesn’t need to happen again, even though the steady-state number of entries in the BGP table will likely exceed the magic 512,000 number again. Hopefully, with this brief outage, lessons can be learned, and equipment can be re-configured or upgraded to prevent this particular issue from rearing its head again in the future.


12 Comments


juampavalverde - Thursday, August 14, 2014

I'm happy that now i can understand what happened and why! Netacad FTW!

bigrollinnoob - Saturday, August 16, 2014

This is CCNP level stuff I believe, are you a CCNP?

TheJian - Sunday, August 17, 2014

You don't have to be a CCNP to learn this stuff (IE you don't have to pass a test to watch training vids and play with racks at work if the company wants you to learn something). The site he's referencing is the cisco networking academy. Why would you care how he knows or even if he does to begin with? Is he required to prove something here for making a comment?

Thermogenic - Thursday, August 14, 2014

The affected routers need to be replaced. The change support the larger number of IPv4 routes greatly compromises IPv6 (only 8,000 routes).

thewishy - Friday, August 15, 2014

Odds are older routers / l3 switches aren't doing IPv6, and they'd be replaced anyway if you were running an IPv6 rollout.

coburn_c - Thursday, August 14, 2014

It took about 2 weeks for me and my fellow CCNA lab-mates to realize that Cisco software and spec is absolutely terrible. Cisco designs for one thing, longevity. Now we have the entire world running on mediocre specced equipment using proprietary and laughable technologies -- that will never die.

Kougar - Friday, August 15, 2014

I hope they do learn. The Santikos site was unreachable for hours , and because Google/Fandango both have no concept of AVX screenings nor mention which screenings are reserved seating only the local mega-theater lost my business on Tuesday.

keristerzt - Friday, August 15, 2014

Thanks for this topic, and now I know why several days ago, my network seems down but I don't know why then I go check the router it says "out of memory" or somethings and I was like "Wut, what the hell is this?!" I was so busy so I leave it there and a day later it's back to normal.

themeinme75 - Friday, August 15, 2014

thank you for the concise info. I thought it would end in the NEAR future

DNABlob - Sunday, August 17, 2014

This is something that could happen to any vendor. Cisco just happens to be very popular and has TCAM configuration that are a bit too optimistic about IPv6 ramp-up.

The really unfortunate part is that the 7600/6500 platforms are still actually quite a good fit for network-edge for most folks and have an attractive pricing model if they got a TCAM+Backplane upgrade. The 6800 line is the successor to the 6500 line and will provide that, eventually. Right now it shares the same management and interface cards as the 6500, but will get newer cards with more TCAM and higher backplane throughput. This hasn't happened yet, though.

I say this is unfortunate because, for a lot of Cisco customers, the next-step up gets a lot more expensive. ASR 9000s are really nice boxes, but they're not cheap either and you pay support per-card, not per-chassis. Nexus 7000s are also nice boxes, but they're not cheap either and the cards that make them a good network-edge router get even more expensive (M2 series cards)